Reinforcement Learning (RL) based Energy Optimization

A CPG multinational wanted to aggressively reduce energy consumption and CO2 emissions at its factories as part of its global sustainability goals.

Heating, Ventilation and Air Conditioning (HVAC) units are responsible for maintaining the temperature and humidity settings in a building. Studies have shown that HVAC accounts for almost 50% of the energy consumption in a building and 10% of global electricity usage. HVAC optimization thus has the potential to contribute significantly towards sustainability goals by reducing energy consumption and CO2 emissions.

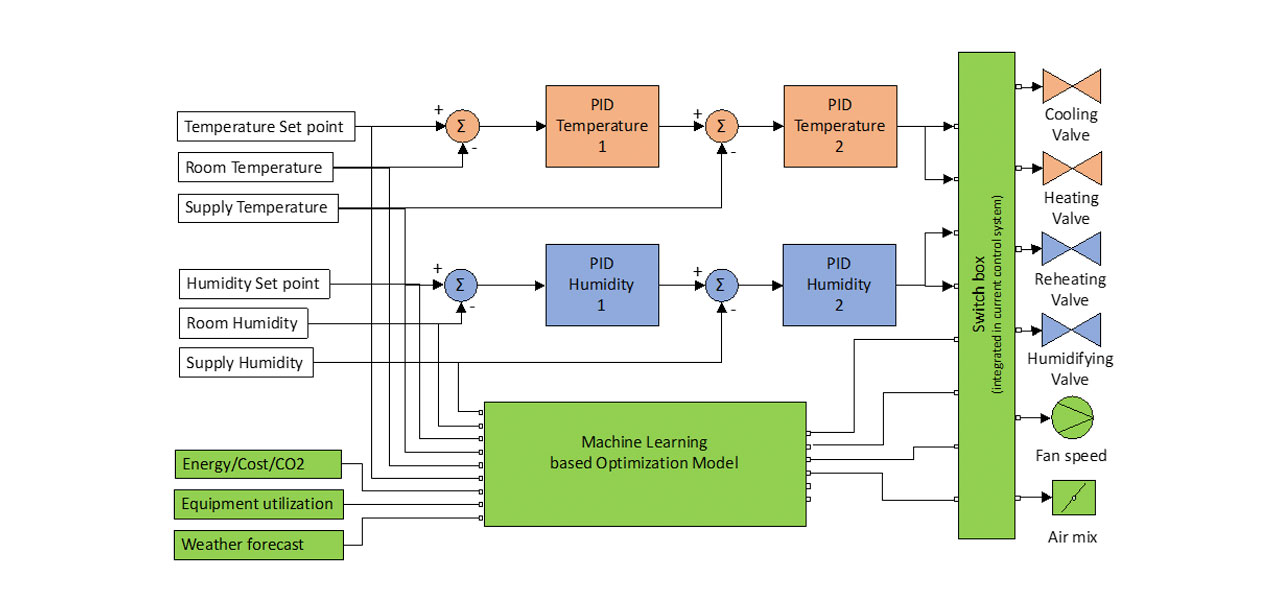

We designed a Reinforcement Learning (RL) based energy optimization model to control the factory’s HVAC units. The RL model is deployed at the factory edge, providing near-real-time setpoint recommendations to the HVAC units. The RL-based HVAC controller learns and adapts to real-life factory settings without the need for any offline training. Benchmarking results show the potential to reduce energy consumption by up to 25% compared with the previously used Proportional–Integral–Derivative (PID) controllers.
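
To make the idea of learning online concrete, the following is a minimal sketch of a controller that updates its policy after every control step, with no offline training phase. It assumes tabular Q-learning, a small set of discrete valve openings, fixed comfort targets, and a reward that penalises both temperature deviation and valve use. All of these (the algorithm, `ACTIONS`, the target values, the `reward` weights) are illustrative assumptions, not details of the deployed model.

```python
import random
from collections import defaultdict

# Hypothetical discrete valve openings (percent) the agent can recommend.
ACTIONS = [0, 25, 50, 75, 100]


class OnlineQController:
    """Tabular Q-learning agent that learns while it controls."""

    def __init__(self, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
        self.alpha, self.gamma, self.epsilon = alpha, gamma, epsilon
        # Unseen states start with a zero value for every action.
        self.q = defaultdict(lambda: [0.0] * len(ACTIONS))
        self.rng = random.Random(seed)

    @staticmethod
    def state(temp_c, humidity_pct, target_temp_c=22.0, target_humidity_pct=50.0):
        # Bucket the deviation from the (assumed) targets into a small grid.
        t = max(-3, min(3, round(temp_c - target_temp_c)))
        h = max(-2, min(2, round((humidity_pct - target_humidity_pct) / 10)))
        return (t, h)

    def act(self, s):
        # Epsilon-greedy: explore occasionally, otherwise pick the best-known action.
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(ACTIONS))
        values = self.q[s]
        return values.index(max(values))

    def learn(self, s, a, r, s_next):
        # Standard one-step Q-learning update.
        td_target = r + self.gamma * max(self.q[s_next])
        self.q[s][a] += self.alpha * (td_target - self.q[s][a])


def reward(temp_c, valve_pct, target_temp_c=22.0, energy_weight=0.01):
    # Assumed reward: comfort deviation plus a proxy for energy use.
    return -abs(temp_c - target_temp_c) - energy_weight * valve_pct
```

In each control cycle the agent would call `state` on the latest reading, `act` to choose a valve opening, and `learn` once the next reading arrives. A production controller would more likely use a continuous state and action representation; the table here only keeps the sketch short.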

Solution

- Designed a Reinforcement Learning (RL) based energy optimization model to control the factory HVAC units.

- Deployed the RL model at the edge to provide near-real-time setpoint recommendations to the HVAC units.

- Stored input and output values in the cloud to monitor and refine the models.

Technology Stack

The streaming architecture connected the HVAC PLCs to a Kepware server, which acted as a producer, transmitting the temperature and humidity sensor readings over MQTT. The Reinforcement Learning (RL) model acted as the MQTT consumer, returning the recommended HVAC control (valve opening) values. RL model training and deployment were performed on the Amazon SageMaker platform. The sensor readings and RL output values were persisted in an InfluxDB database for batch analysis and model re-training.
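
The consumer side of this flow can be sketched as a pure message handler: it takes a sensor message, asks a policy for a valve opening, and returns the control message to publish. The topic layout, the JSON field names (`temperature_c`, `humidity_pct`, `valve_opening_pct`, `ts`), and `simple_policy` are hypothetical, chosen for illustration only. Wiring the handler to an MQTT client library and writing both messages to the time-series database are deliberately left out.

```python
import json

# Hypothetical topic layout; the actual topics are not specified in this case study.
SENSOR_TOPIC = "factory/hvac/+/sensors"
CONTROL_TOPIC_TEMPLATE = "factory/hvac/{unit}/valve"


def simple_policy(temp_c, humidity_pct):
    """Placeholder standing in for the RL model: opens the valve more as it gets warmer.

    Humidity is accepted but ignored in this stand-in.
    """
    return 50.0 + 10.0 * (temp_c - 22.0)


def handle_sensor_message(topic, payload, policy=simple_policy):
    """Turn one sensor message into a (control_topic, control_payload) pair."""
    unit = topic.split("/")[2]      # e.g. "ahu1" in factory/hvac/ahu1/sensors
    reading = json.loads(payload)   # accepts str or bytes
    valve = policy(reading["temperature_c"], reading["humidity_pct"])
    valve = max(0.0, min(100.0, float(valve)))  # clamp to a valid opening
    control = {
        "unit": unit,
        "valve_opening_pct": valve,
        "ts": reading.get("ts"),    # echo the timestamp for later batch analysis
    }
    return CONTROL_TOPIC_TEMPLATE.format(unit=unit), json.dumps(control)
```

Keeping the handler free of network code means the same function can be called from an MQTT subscription callback at the edge and replayed offline against stored readings when refining the model.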

Business Value Outcome

Manufacturing models for Industry 4.0 will rely not only on clean energy but also on optimizing energy consumption. This project delivered:

- The solution has been deployed at 5+ factories so far, with rollout to all 40 factories planned by Q4 2021.

- Around 25% energy savings within 3 months of deployment, compared with the previously used Proportional–Integral–Derivative (PID) controllers.
