Automotive Security and Safety Application

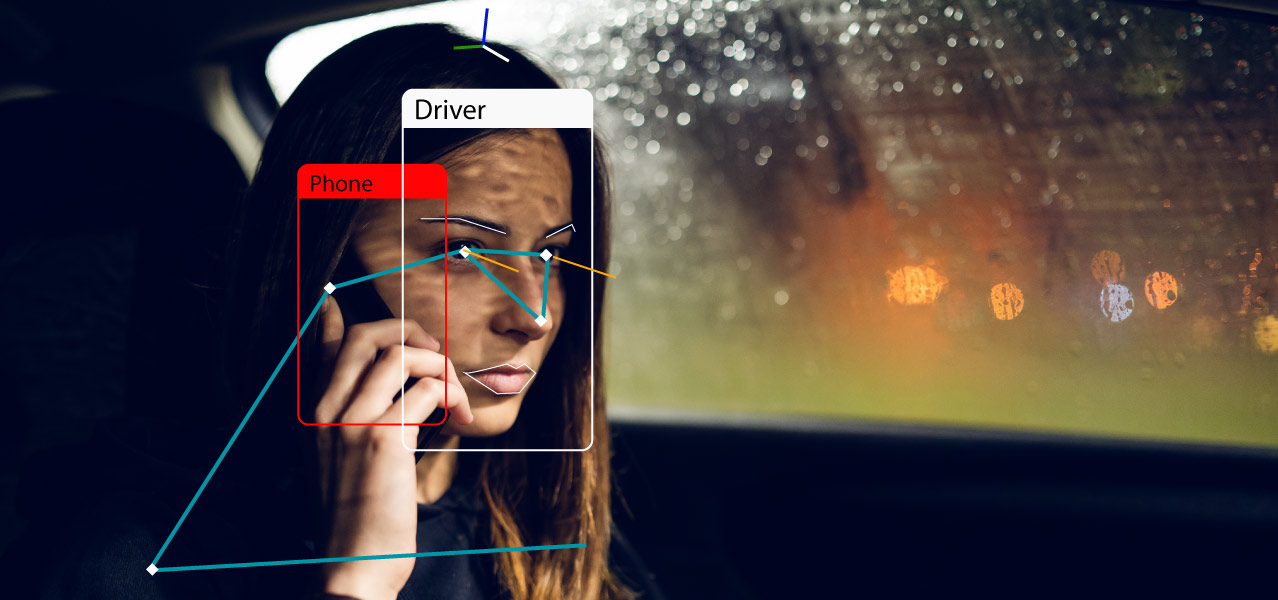

An OEM in the automotive industry wanted a complete camera-based solution to improve driver and passenger safety and security. The application uses machine learning techniques in computer vision to detect driver and passenger presence and seat occupancy, and to track occupants' behavior, emotions, and attentiveness during the drive. Driver drowsiness, distraction by in-cabin events, and the driver's attention to on-the-road events are detected and reported in real time. Our engineering team built a solution that orchestrates state-of-the-art computer vision algorithms and optimizes their execution on embedded platforms. The target deployment platform was chosen according to automotive industry requirements and standards, with particular attention to accuracy, reliability, latency, and throughput.

Solution

Identified the AI components critical to the solution (e.g., face detection, face recognition, face-landmark detection, eye-gaze estimation, head-pose estimation, body-pose detection, object detection, age and gender classification). Adapted state-of-the-art AI components and tailored them for execution on embedded boards. Benchmarked the chosen components and optimized the critical sections of their execution.
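
The benchmarking step can be pictured with a small timing harness. The sketch below is an assumption about how per-component latency might be measured, not the project's actual tooling; `benchmark` and the stand-in `fake_face_detector` are hypothetical names, and a real run would call the model on the target board instead.

```python
import statistics
import time


def benchmark(component, frame, warmup=3, runs=20):
    """Time repeated calls of `component(frame)` and summarize latency in ms."""
    # Warm-up calls are excluded so one-time setup costs do not skew results.
    for _ in range(warmup):
        component(frame)

    samples_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        component(frame)
        samples_ms.append((time.perf_counter() - start) * 1000.0)

    samples_ms.sort()
    mean_ms = statistics.fmean(samples_ms)
    # Nearest-rank 95th percentile, computed with integer arithmetic.
    p95_index = (95 * runs + 99) // 100 - 1
    return {
        "runs": runs,
        "mean_ms": mean_ms,
        "median_ms": statistics.median(samples_ms),
        "p95_ms": samples_ms[p95_index],
        "fps": 1000.0 / mean_ms if mean_ms > 0 else float("inf"),
    }


def fake_face_detector(frame):
    """Hypothetical stand-in for a real model: just does some arithmetic."""
    return sum(pixel * pixel for pixel in frame)


if __name__ == "__main__":
    print(benchmark(fake_face_detector, frame=list(range(10_000))))
```

Reporting a tail percentile next to the mean matters for real-time use, because a component that is fast on average can still miss its frame deadline occasionally.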

Designed and implemented the embedded solution on a scalable architecture that orchestrates the AI components in real time. Combined the basic AI components within a multi-tasking framework to define the data-flow graph of the application. Implemented several software layers for video signal processing and reasoning. Met the design requirements for processing latency and the number of frames processed per second.
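
To illustrate what a data-flow graph of AI components means, the sketch below runs a handful of stages in dependency order for one frame. It is a simplified assumption, not the project's framework: the stage names, the dictionary-based frame, and the 30-degree yaw threshold are all hypothetical, and the stages are trivial stand-ins for real models.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def run_graph(stages, dependencies, frame):
    """Run each stage once for a frame, in an order that respects dependencies.

    stages:       name -> callable(frame, inputs) returning that stage's output
    dependencies: name -> set of stage names whose outputs the stage consumes
    """
    results = {}
    for name in TopologicalSorter(dependencies).static_order():
        inputs = {dep: results[dep] for dep in dependencies.get(name, ())}
        results[name] = stages[name](frame, inputs)
    return results


# Hypothetical stand-ins: each reads precomputed values from the frame dict.
def face_detection(frame, inputs):
    return {"box": (40, 30, 120, 120)}


def landmarks(frame, inputs):
    return {"eyes_open": frame["eyes_open"], "box": inputs["face_detection"]["box"]}


def head_pose(frame, inputs):
    return {"yaw_deg": frame["yaw_deg"]}


def attention(frame, inputs):
    # Assumed rule for illustration: eyes open and head roughly facing forward.
    facing_road = abs(inputs["head_pose"]["yaw_deg"]) < 30
    return bool(inputs["landmarks"]["eyes_open"] and facing_road)


STAGES = {
    "face_detection": face_detection,
    "landmarks": landmarks,
    "head_pose": head_pose,
    "attention": attention,
}
DEPENDENCIES = {
    "face_detection": set(),
    "landmarks": {"face_detection"},
    "head_pose": {"face_detection"},
    "attention": {"landmarks", "head_pose"},
}

if __name__ == "__main__":
    print(run_graph(STAGES, DEPENDENCIES, {"eyes_open": True, "yaw_deg": 5.0}))
```

This version executes stages one after another. In a real-time system, stages with no mutual dependency (here `landmarks` and `head_pose`) could run concurrently or on different compute units.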

Identified performance and accuracy shortcomings specific to the in-cabin application domain, which is not necessarily well aligned with the generic domains that mainstream models and their training data target. Defined and prepared dozens of data-collection scenarios to address the shortcomings of the original models. Collected data with real people in cars to enlarge the available datasets and improve in-the-field performance.

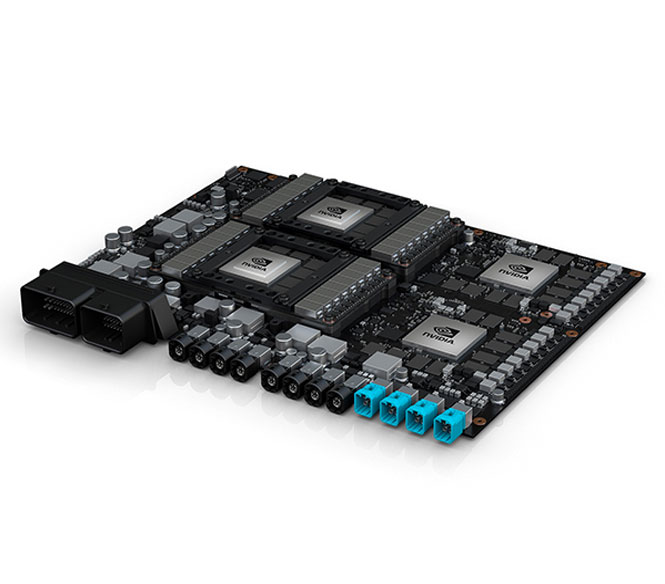

Refined and retrained models to better meet stringent KPRs. Used quantization and optimization techniques to find sweet spots on the performance-cost curves. Ensured efficient execution on the target platforms by using the available heterogeneous computing resources (CPUs, GPUs, and dedicated neural network processing units, also known as deep-learning accelerators).
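
As a reminder of what quantization trades off, the sketch below applies symmetric per-tensor int8 quantization to a few weights and measures the rounding error. The case study does not state which quantization scheme was used, so this particular scheme and the function names are assumptions chosen for illustration.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # One shared scale for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the integers back to approximate float values."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.003, 1.0]
    quantized, scale = quantize_int8(weights)
    restored = dequantize(quantized, scale)
    worst_error = max(abs(w - r) for w, r in zip(weights, restored))
    print(quantized, scale, worst_error)
```

Each value is stored in 8 bits instead of 32, and the rounding error per value stays within about half the scale. Small weights such as `0.003` are the ones that lose the most relative precision, which is one reason accuracy has to be re-checked after quantization.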

Developed supporting applications to facilitate data collection and annotation. Implemented a database back-end to store the collected data and annotations. Devised methods for maintaining the quality of the collected data. Built mobile applications and the back-end responsible for onboarding drivers and passengers, allowing users to link their identities to their facial fingerprints.
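
One way to picture linking an identity to a facial fingerprint is a registry that stores one embedding vector per user and matches new faces by cosine similarity. This is a sketch under stated assumptions: the case study does not describe the fingerprint format or matching rule, so the `IdentityRegistry` class, the 3-dimensional vectors, and the 0.8 threshold are all hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


class IdentityRegistry:
    """Hypothetical in-memory store mapping user IDs to face embeddings."""

    def __init__(self, threshold=0.8):
        self.threshold = threshold  # assumed value; would be tuned on real data
        self._embeddings = {}

    def enroll(self, user_id, embedding):
        self._embeddings[user_id] = list(embedding)

    def identify(self, embedding):
        """Return the best-matching user ID, or None if no match is close enough."""
        best_id, best_score = None, -1.0
        for user_id, enrolled in self._embeddings.items():
            score = cosine_similarity(embedding, enrolled)
            if score > best_score:
                best_id, best_score = user_id, score
        return best_id if best_score >= self.threshold else None


if __name__ == "__main__":
    registry = IdentityRegistry()
    registry.enroll("alice", [1.0, 0.0, 0.0])
    registry.enroll("bob", [0.0, 1.0, 0.0])
    print(registry.identify([0.9, 0.1, 0.0]))
    print(registry.identify([0.6, 0.6, 0.5]))
```

Returning `None` for weak matches keeps an unknown passenger from being silently assigned to the nearest enrolled user.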

Technology Stack

- Bonseyes LPDNN (Low-Power Deep Neural Networks), an inference engine designed and built for embedded solutions and their power and complexity constraints

- NVIDIA AI development platforms: powerful prototyping boards whose heterogeneous computing resources are accessed through the NVIDIA CUDA and TensorRT libraries

- Python machine learning ecosystem (PyTorch) for rapid prototyping and ML model development

- C/C++ for embedded code where execution performance is critical

- Standard mobile and back-end technologies for helper applications (iOS Swift, Flask)

- Other inference engines supporting model transfer, comparison, and benchmarking (ONNX Runtime, NCNN)

- Qt for the GUI

Business Value Outcome

Onboard driver distraction monitoring can greatly reduce the number of serious and critical accidents involving drivers, pedestrians, and cyclists. This use case demonstrated:

- A real-time solution for driver monitoring and occupant detection, deployed and tested in-car

- A demo application successfully delivered to the customer and deployed in their test cars

- A project moving towards the production phase
